In this example, we send a list of chat messages to a cloud model and print the reasoning and text of its reply.

- Python

- Swift

- TypeScript

- Rust

Paste into main.py

import asyncio

from uzu import ChatConfig, ChatMessage, ChatReplyConfig, Engine, EngineConfig, ReasoningEffort


async def main() -> None:
    # "OPENAI_API_KEY" is a placeholder: replace it with your OpenAI API key.
    engine_config = EngineConfig.create().with_openai_api_key("OPENAI_API_KEY")
    engine = await Engine.create(engine_config)

    model = await engine.model("gpt-5")
    if model is None:
        raise RuntimeError("Model not found")

    messages = [
        ChatMessage.system().with_reasoning_effort(ReasoningEffort.Low),
        ChatMessage.user().with_text("How LLMs work"),
    ]

    session = await engine.chat(model, ChatConfig.create())
    replies = await session.reply(messages, ChatReplyConfig.create())

    # Print the reasoning and text of the first reply, if there is one.
    if replies:
        message = replies[0].message
        print(f"Reasoning: {message.reasoning}")
        print(f"Text: {message.text}")


if __name__ == "__main__":
    asyncio.run(main())
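The example above passes the literal string "OPENAI_API_KEY" as a placeholder. As a minimal sketch, and not part of the uzu API, the key could instead be read from an environment variable using only the Python standard library. The helper name load_api_key and the choice of OPENAI_API_KEY as the variable name are assumptions made for illustration.

```python
import os


def load_api_key(env_var: str = "OPENAI_API_KEY") -> str:
    """Return the key stored in env_var, or raise if it is missing or empty.

    Hypothetical helper for illustration; it is not provided by uzu.
    """
    key = os.environ.get(env_var, "").strip()
    if not key:
        raise RuntimeError(f"Environment variable {env_var} is not set")
    return key
```

With this helper, the configuration line in main.py could become EngineConfig.create().with_openai_api_key(load_api_key()), which keeps the key out of the source file.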

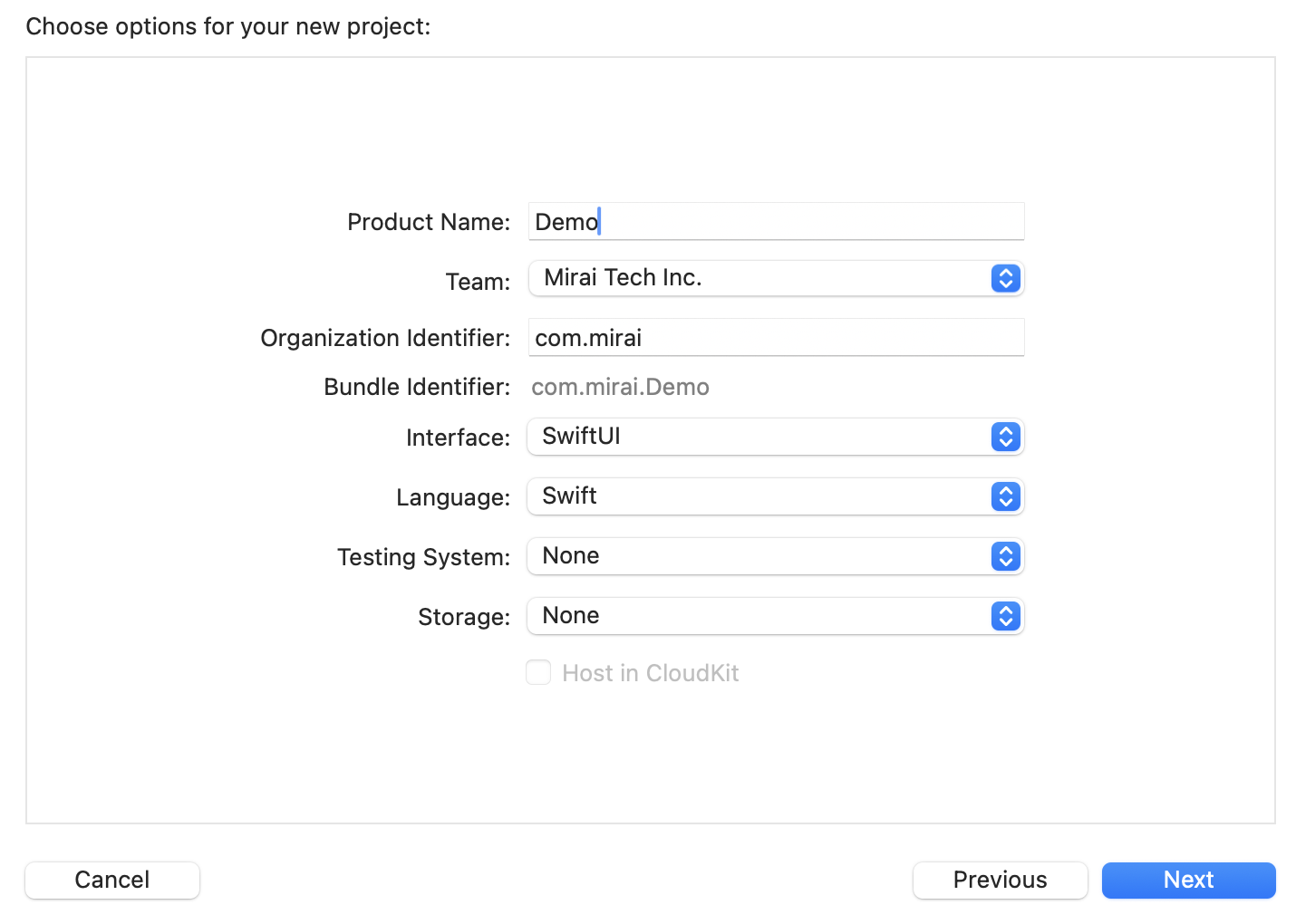

Paste the snippet

import Uzu

public func runChatCloud() async throws {
    // "OPENAI_API_KEY" is a placeholder: replace it with your OpenAI API key.
    let engineConfig = EngineConfig.create().withOpenaiApiKey(openaiApiKey: "OPENAI_API_KEY")
    let engine = try await Engine.create(config: engineConfig)

    guard let model = try await engine.model(identifier: "gpt-5") else {
        return
    }

    let messages = [
        ChatMessage.system().withReasoningEffort(reasoningEffort: .low),
        ChatMessage.user().withText(text: "How LLMs work")
    ]

    let session = try await engine.chat(model: model, config: .create())
    let reply = try await session.reply(input: messages, config: .create())

    // Print the reasoning and text of the last reply, if there is one.
    guard let message = reply.last?.message else {
        return
    }
    print("Reasoning: \(message.reasoning() ?? "empty")")
    print("Text: \(message.text() ?? "empty")")
}

Initialize a tsconfig.json

{
    "compilerOptions": {
        "target": "es2020",
        "module": "commonjs",
        "moduleResolution": "node",
        "strict": true,
        "esModuleInterop": true,
        "outDir": "dist",
        "types": [
            "node"
        ]
    },
    "include": [
        "*.ts"
    ]
}

Create main.ts

import { ChatConfig, ChatMessage, ChatReplyConfig, Engine, EngineConfig, ReasoningEffort } from '@trymirai/uzu';

async function main() {
    // 'OPENAI_API_KEY' is a placeholder: replace it with your OpenAI API key.
    let engineConfig = EngineConfig.create().withOpenaiApiKey('OPENAI_API_KEY');
    let engine = await Engine.create(engineConfig);

    let model = await engine.model('gpt-5');
    if (!model) {
        throw new Error('Model not found');
    }

    let messages = [
        ChatMessage.system().withReasoningEffort("Low" as ReasoningEffort),
        ChatMessage.user().withText('How LLMs work')
    ];

    let session = await engine.chat(model, ChatConfig.create());
    let reply = await session.reply(messages, ChatReplyConfig.create());

    // Print the reasoning and text of the first reply, if there is one.
    let message = reply[0]?.message;
    if (message) {
        console.log('Reasoning: ', message.reasoning);
        console.log('Text: ', message.text);
    }
}

main().catch((error) => {
    console.error(error);
});

Install dependencies

cargo add uzu --git https://github.com/trymirai/uzu

cargo add tokio --features full

Paste into src/main.rs

use uzu::{
    engine::{Engine, EngineConfig},
    types::{
        basic::ReasoningEffort,
        session::chat::{ChatConfig, ChatMessage, ChatReplyConfig},
    },
};

#[tokio::main]
async fn main() -> Result<(), Box<dyn std::error::Error>> {
    // "OPENAI_API_KEY" is a placeholder: replace it with your OpenAI API key.
    let engine_config = EngineConfig::default().with_openai_api_key("OPENAI_API_KEY".to_string());
    let engine = Engine::new(engine_config).await?;

    let model = engine.model("gpt-5".to_string()).await?.ok_or("Model not found")?;

    let messages = vec![
        ChatMessage::system().with_reasoning_effort(ReasoningEffort::Low),
        ChatMessage::user().with_text("How LLMs work".to_string()),
    ];

    let session = engine.chat(model, ChatConfig::default()).await?;
    let replies = session.reply(messages, ChatReplyConfig::default()).await?;

    // Print the reasoning and text of the first reply, if there is one.
    if let Some(reply) = replies.first() {
        println!("Reasoning: {}", reply.message.reasoning().unwrap_or_default());
        println!("Text: {}", reply.message.text().unwrap_or_default());
    }

    Ok(())
}