
In this example, we use the classification speculation preset to determine the sentiment of the user's input. The example is available in Python, Swift, TypeScript, and Rust.
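A closed list of values helps because the answer must be one of a fixed set of labels. Those labels are natural candidates for a speculative draft. The toy sketch below illustrates that idea under stated assumptions. It treats characters as tokens and uses a hypothetical `propose_drafts` function. It is not Uzu's implementation of the classification preset.

```python
# Toy illustration only: "tokens" are characters here, not real model tokens,
# and this is NOT how Uzu implements ChatSpeculationPreset.Classification.


def propose_drafts(prefix: str, labels: list[str]) -> list[str]:
    """Return the remaining text of every label consistent with `prefix`.

    Because the answer must be one of a closed set of values, whatever the
    model has produced so far narrows the candidates, and each surviving
    label's remainder can be proposed as a multi-token draft.
    """
    return [label[len(prefix):] for label in labels if label.startswith(prefix)]


labels = ["Happy", "Sad", "Angry", "Fearful", "Surprised", "Disgusted"]

print(propose_drafts("", labels))    # every label is still a candidate
print(propose_drafts("S", labels))   # ['ad', 'urprised']
print(propose_drafts("Su", labels))  # ['rprised']
```

Once only one candidate remains, its entire remainder can be drafted at once instead of being generated token by token.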

Python: paste the following into `main.py`:

```python
import asyncio

from uzu import (
    ChatConfig,
    ChatMessage,
    ChatReplyConfig,
    ChatSpeculationPreset,
    Engine,
    EngineConfig,
    Feature,
    ReasoningEffort,
    SamplingMethod,
)


async def main() -> None:
    engine_config = EngineConfig.create()
    engine = await Engine.create(engine_config)

    model = await engine.model("Qwen/Qwen3-0.6B")
    if model is None:
        raise RuntimeError("Model not found")

    # Download the model, printing progress updates as they arrive.
    async for update in (await engine.download(model)).iterator():
        print(f"Download progress: {update.progress}")

    # The feature to detect and the values it can take.
    feature = Feature(
        "sentiment",
        ["Happy", "Sad", "Angry", "Fearful", "Surprised", "Disgusted"],
    )

    # Enable the classification speculation preset for this feature.
    chat_config = ChatConfig.create().with_speculation_preset(ChatSpeculationPreset.Classification(feature))
    session = await engine.chat(model, chat_config)

    text_to_detect_feature = "Today's been awesome! Everything just feels right, and I can't stop smiling."
    prompt = (
        f'Text is: "{text_to_detect_feature}". '
        f"Choose {feature.name} from the list: {', '.join(feature.values)}. "
        "Answer with one word. Don't add a dot at the end."
    )

    messages = [
        ChatMessage.system().with_reasoning_effort(ReasoningEffort.Disabled),
        ChatMessage.user().with_text(prompt),
    ]

    # Greedy sampling with a small token limit, since the answer is a single word.
    chat_reply_config = ChatReplyConfig.create().with_token_limit(32).with_sampling_method(SamplingMethod.Greedy())

    replies = await session.reply(messages, chat_reply_config)
    if replies:
        reply = replies[0]
        print(f"Prediction: {reply.message.text}")
        print(f"Generated tokens: {reply.stats.tokens_count_output}")


if __name__ == "__main__":
    asyncio.run(main())
```
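The prompt asks for a single word from the list. You may still want to normalize the reply before using it, for example to tolerate stray whitespace, a trailing dot, or different casing. A minimal sketch of such a check follows. `parse_label` is a hypothetical helper written for this page, not part of the `uzu` package.

```python
# Hypothetical helper, not part of the uzu package.
import string
from typing import Optional


def parse_label(reply_text: Optional[str], values: list[str]) -> Optional[str]:
    """Map raw reply text onto one of the feature's values, or return None."""
    if not reply_text:
        return None
    # Drop surrounding whitespace and punctuation such as a trailing dot.
    cleaned = reply_text.strip(string.punctuation + string.whitespace)
    for value in values:
        if cleaned.casefold() == value.casefold():
            return value  # return the canonical spelling from the list
    return None


values = ["Happy", "Sad", "Angry", "Fearful", "Surprised", "Disgusted"]
print(parse_label(" happy.\n", values))  # Happy
print(parse_label("Joyful", values))     # None
```

In `main.py`, you could call `parse_label(reply.message.text, feature.values)` after receiving the reply. This assumes `reply.message.text` is a string or `None`.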

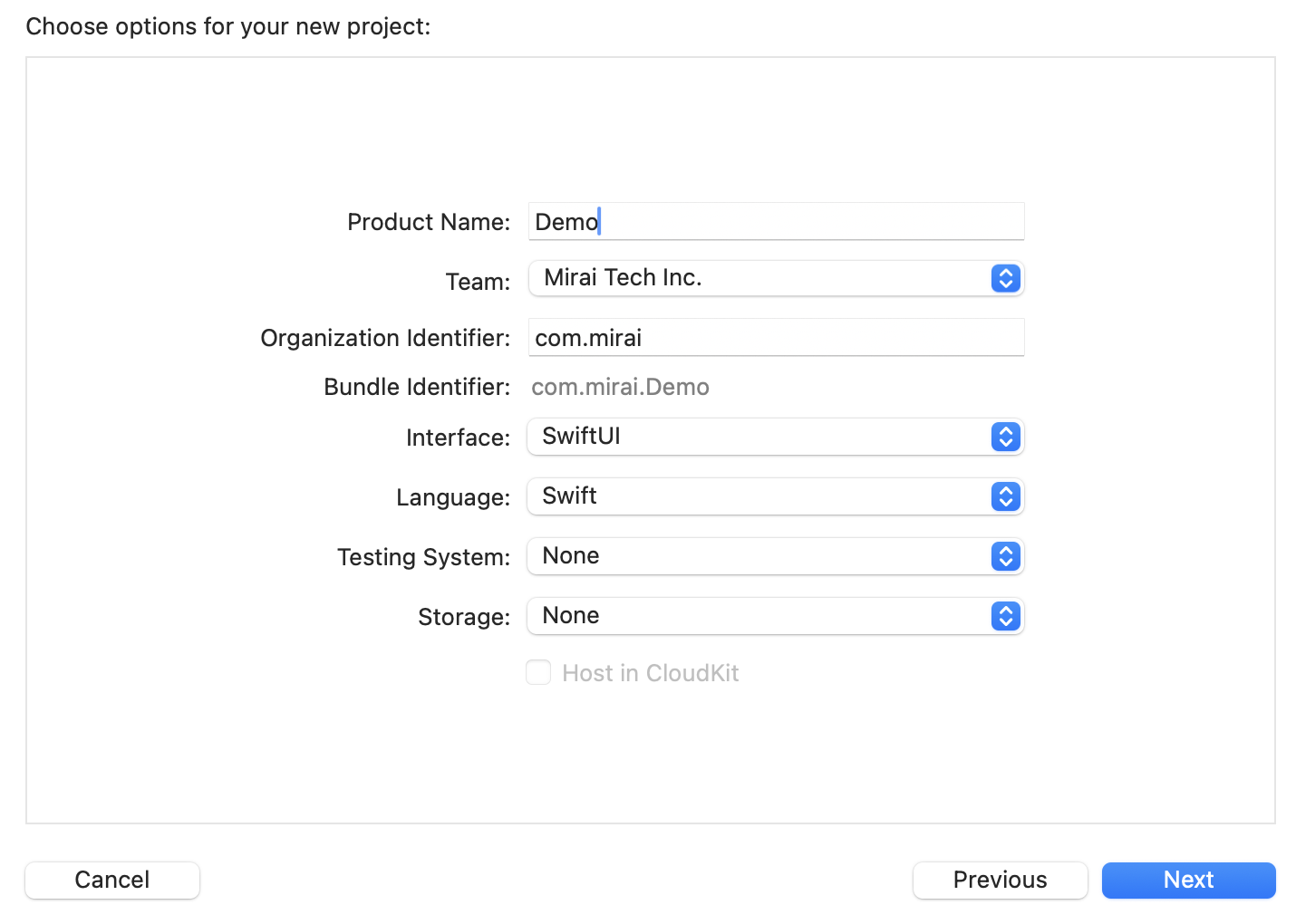

Swift: paste the snippet:

```swift
import Uzu

public func runChatSpeculationClassification() async throws {
    let engineConfig = EngineConfig.create()
    let engine = try await Engine.create(config: engineConfig)

    guard let model = try await engine.model(identifier: "Qwen/Qwen3-0.6B") else {
        return
    }

    for try await update in try await engine.download(model: model).iterator() {
        print("Download progress: \(update.progress())")
    }

    let feature = Feature(name: "sentiment", values: [
        "Happy",
        "Sad",
        "Angry",
        "Fearful",
        "Surprised",
        "Disgusted",
    ])

    let chatConfig = ChatConfig.create().withSpeculationPreset(speculationPreset: .classification(feature: feature))
    let session = try await engine.chat(model: model, config: chatConfig)

    let textToDetectFeature =
        "Today's been awesome! Everything just feels right, and I can't stop smiling."
    let prompt = "Text is: \"\(textToDetectFeature)\". Choose \(feature.name) from the list: \(feature.values.joined(separator: ", ")). Answer with one word. Don't add a dot at the end."

    let messages = [
        ChatMessage.system().withReasoningEffort(reasoningEffort: .disabled),
        ChatMessage.user().withText(text: prompt)
    ]

    let chatReplyConfig = ChatReplyConfig.create().withTokenLimit(tokenLimit: 32).withSamplingMethod(samplingMethod: .greedy)

    let replies = try await session.reply(input: messages, config: chatReplyConfig)
    guard let reply = replies.last else {
        return
    }

    print("Prediction: \(reply.message.text() ?? "empty")")
    print("Generated tokens: \(reply.stats.tokensCountOutput ?? 0)")
}
```

TypeScript: initialize a `tsconfig.json`:

```json
{
    "compilerOptions": {
        "target": "es2020",
        "module": "commonjs",
        "moduleResolution": "node",
        "strict": true,
        "esModuleInterop": true,
        "outDir": "dist",
        "types": [
            "node"
        ]
    },
    "include": [
        "*.ts"
    ]
}
```

Create `main.ts`:

```typescript
import { ChatConfig, ChatMessage, ChatReplyConfig, ChatSpeculationPresetClassification, Engine, EngineConfig, Feature, ReasoningEffort, SamplingMethodGreedy } from '@trymirai/uzu';

async function main() {
    const engineConfig = EngineConfig.create();
    const engine = await Engine.create(engineConfig);

    const model = await engine.model('Qwen/Qwen3-0.6B');
    if (!model) {
        throw new Error('Model not found');
    }

    for await (const update of await engine.download(model)) {
        console.log('Download progress:', update.progress);
    }

    const feature = new Feature('sentiment', [
        'Happy',
        'Sad',
        'Angry',
        'Fearful',
        'Surprised',
        'Disgusted',
    ]);

    const chatConfig = ChatConfig.create().withSpeculationPreset(new ChatSpeculationPresetClassification(feature));
    const session = await engine.chat(model, chatConfig);

    const textToDetectFeature =
        "Today's been awesome! Everything just feels right, and I can't stop smiling.";
    const prompt =
        `Text is: "${textToDetectFeature}". Choose ${feature.name} from the list: ${feature.values.join(', ')}. ` +
        "Answer with one word. Don't add a dot at the end.";

    const messages = [
        ChatMessage.system().withReasoningEffort("Disabled" as ReasoningEffort),
        ChatMessage.user().withText(prompt)
    ];

    const chatReplyConfig = ChatReplyConfig.create().withTokenLimit(32).withSamplingMethod(new SamplingMethodGreedy());

    const reply = (await session.reply(messages, chatReplyConfig))[0];
    if (reply) {
        // console.log already inserts a space between arguments.
        console.log('Prediction:', reply.message.text);
        console.log('Generated tokens:', reply.stats.tokensCountOutput);
    }
}

main().catch((error) => {
    console.error(error);
    process.exitCode = 1;
});
```

Rust: install the dependencies:

```shell
cargo add uzu --git https://github.com/trymirai/uzu
cargo add tokio --features full
```

Paste into `src/main.rs`:

```rust
use uzu::{
    engine::{Engine, EngineConfig},
    types::{
        basic::{Feature, ReasoningEffort, SamplingMethod},
        session::chat::{ChatConfig, ChatMessage, ChatReplyConfig, ChatSpeculationPreset},
    },
};

#[tokio::main]
async fn main() -> Result<(), Box<dyn std::error::Error>> {
    let engine_config = EngineConfig::default();
    let engine = Engine::new(engine_config).await?;

    let model = engine.model("Qwen/Qwen3-0.6B".to_string()).await?.ok_or("Model not found")?;

    let downloader = engine.download(&model).await?;
    while let Some(update) = downloader.next().await {
        println!("Download progress: {}", update.progress());
    }

    let feature = Feature {
        name: "sentiment".to_string(),
        values: vec![
            "Happy".to_string(),
            "Sad".to_string(),
            "Angry".to_string(),
            "Fearful".to_string(),
            "Surprised".to_string(),
            "Disgusted".to_string(),
        ],
    };

    let chat_config = ChatConfig::default().with_speculation_preset(Some(ChatSpeculationPreset::Classification {
        feature: feature.clone(),
    }));
    let session = engine.chat(model, chat_config).await?;

    let text_to_detect_feature = "Today's been awesome! Everything just feels right, and I can't stop smiling.";
    let prompt = format!(
        "Text is: \"{text_to_detect_feature}\". Choose {} from the list: {}. Answer with one word. Don't add a dot at the end.",
        feature.name,
        feature.values.join(", ")
    );

    let messages = vec![
        ChatMessage::system().with_reasoning_effort(ReasoningEffort::Disabled),
        ChatMessage::user().with_text(prompt),
    ];

    let chat_reply_config =
        ChatReplyConfig::default().with_token_limit(Some(32)).with_sampling_method(SamplingMethod::Greedy {});

    let replies = session.reply(messages, chat_reply_config).await?;
    if let Some(reply) = replies.first() {
        println!("Prediction: {}", reply.message.text().unwrap_or_default());
        println!("Generated tokens: {}", reply.stats.tokens_count_output.unwrap_or_default());
    }

    Ok(())
}
```

The printed stats show that the answer is ready immediately after the prefill step. Because of speculative decoding, token-by-token generation never even starts, which significantly improves generation speed.
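A toy model of greedy speculative decoding shows why no generation steps are needed when the draft is right. The target model checks every drafted token, and the longest matching prefix is accepted. The first mismatch is replaced by the model's own prediction. The sketch below assumes the classification preset drafts a complete label. `verify_draft` and `toy_model` are hypothetical. A real engine scores all draft positions in one forward pass instead of calling the model once per token.

```python
# Toy model of greedy speculative decoding; not Uzu's implementation.
from typing import Callable, List, Sequence, Tuple


def verify_draft(
    context: Sequence[str],
    draft: Sequence[str],
    greedy_next: Callable[[Sequence[str]], str],
) -> Tuple[List[str], int]:
    """Accept the longest draft prefix that matches greedy decoding.

    Returns the accepted tokens (plus the model's own token at the first
    mismatch, if any) and how many tokens were taken from the draft.
    """
    accepted: List[str] = []
    for token in draft:
        predicted = greedy_next(list(context) + accepted)
        if predicted != token:
            accepted.append(predicted)  # the model's token replaces the wrong draft token
            return accepted, len(accepted) - 1
        accepted.append(token)
    return accepted, len(accepted)


ANSWER = ["Hap", "py", "<eos>"]


def toy_model(context: Sequence[str]) -> str:
    # Pretend the model greedily emits "Hap", "py", "<eos>" after the prompt.
    generated = context[1:]  # context[0] stands in for the whole prompt
    return ANSWER[len(generated)] if len(generated) < len(ANSWER) else "<eos>"


print(verify_draft(["<prompt>"], ["Hap", "py", "<eos>"], toy_model))  # (['Hap', 'py', '<eos>'], 3)
print(verify_draft(["<prompt>"], ["Sad", "<eos>"], toy_model))        # (['Hap'], 0)
```

In the first call, all three drafted tokens are accepted, so the whole answer is available after a single verification. In the second, the wrong draft is rejected and only the model's first token survives.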