In this example, we will use the summarization speculation preset to generate a summary of the input text.

- Python

- Swift

- TypeScript

- Rust

Paste into main.py

import asyncio

from uzu import (
    ChatConfig,
    ChatMessage,
    ChatReplyConfig,
    ChatSpeculationPreset,
    Engine,
    EngineConfig,
    ReasoningEffort,
    SamplingMethod,
)


async def main() -> None:
    engine_config = EngineConfig.create()
    engine = await Engine.create(engine_config)

    model = await engine.model("Qwen/Qwen3-0.6B")
    if model is None:
        raise RuntimeError("Model not found")

    # Download the model, printing progress updates as they arrive.
    async for update in (await engine.download(model)).iterator():
        print(f"Download progress: {update.progress}")

    text_to_summarize = (
        "A Large Language Model (LLM) is a type of artificial intelligence that processes and generates human-like text. "
        "It is trained on vast datasets containing books, articles, and web content, allowing it to understand and predict language patterns. "
        "LLMs use deep learning, particularly transformer-based architectures, to analyze text, recognize context, and generate coherent responses. "
        "These models have a wide range of applications, including chatbots, content creation, translation, and code generation. "
        "One of the key strengths of LLMs is their ability to generate contextually relevant text based on prompts. "
        "They utilize self-attention mechanisms to weigh the importance of words within a sentence, improving accuracy and fluency. "
        "Examples of popular LLMs include OpenAI's GPT series, Google's BERT, and Meta's LLaMA. "
        "As these models grow in size and sophistication, they continue to enhance human-computer interactions, "
        "making AI-powered communication more natural and effective."
    )
    prompt = f'Text is: "{text_to_summarize}". Write only summary itself.'

    # Disable reasoning in the system message; the user message carries the prompt.
    messages = [
        ChatMessage.system().with_reasoning_effort(ReasoningEffort.Disabled),
        ChatMessage.user().with_text(prompt),
    ]

    # Open a chat session with the summarization speculation preset.
    chat_config = ChatConfig.create().with_speculation_preset(ChatSpeculationPreset.Summarization())
    session = await engine.chat(model, chat_config)

    # Greedy sampling with a 256-token limit.
    chat_reply_config = ChatReplyConfig.create().with_token_limit(256).with_sampling_method(SamplingMethod.Greedy())
    replies = await session.reply(messages, chat_reply_config)
    if replies:
        reply = replies[0]
        print(f"Summary: {reply.message.text}")
        print(f"Generation t/s: {reply.stats.generate_tokens_per_second}")


if __name__ == "__main__":
    asyncio.run(main())

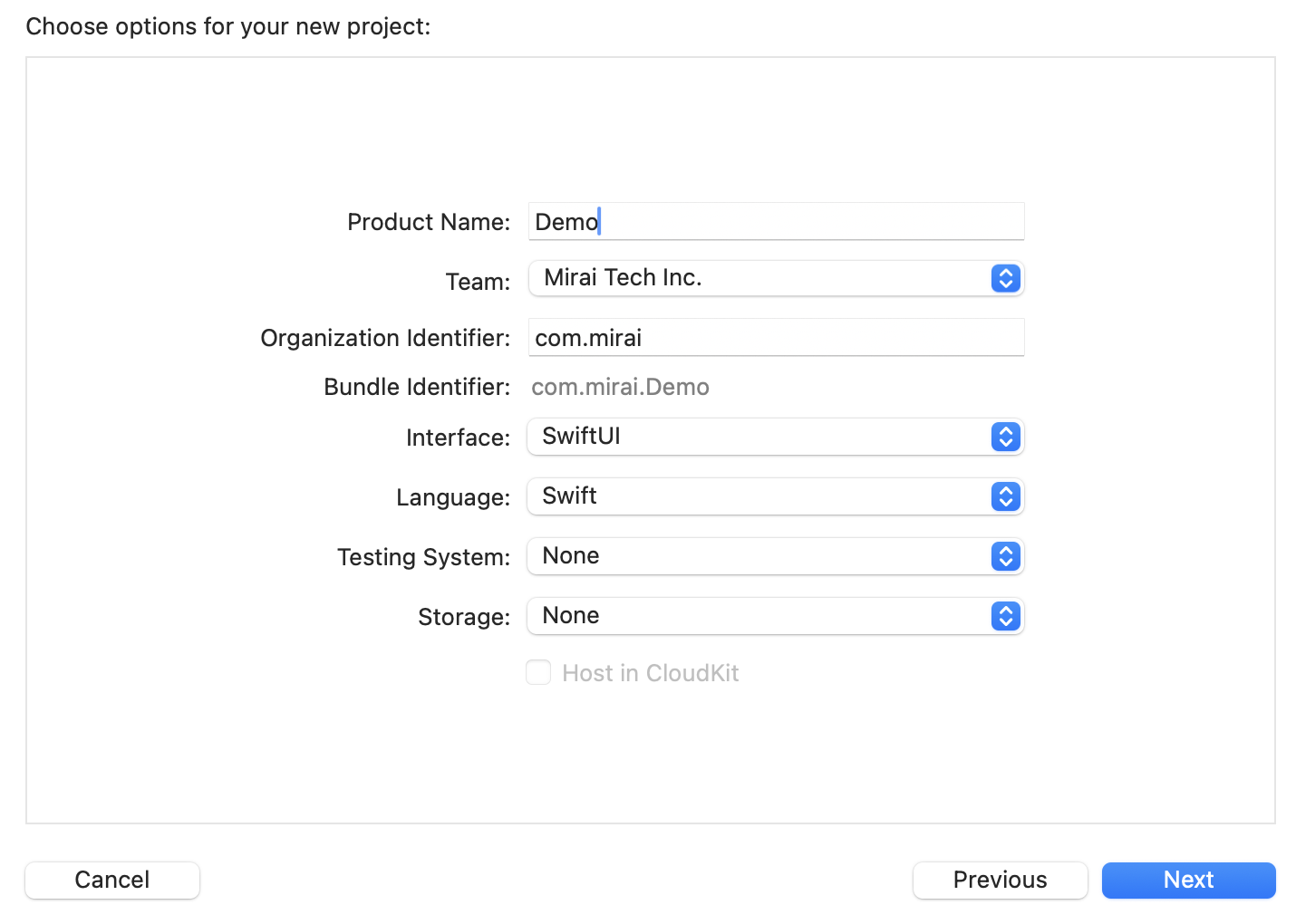

Paste the snippet

import Uzu

public func runChatSpeculationSummarization() async throws {
    let engineConfig = EngineConfig.create()
    let engine = try await Engine.create(config: engineConfig)

    guard let model = try await engine.model(identifier: "Qwen/Qwen3-0.6B") else {
        return
    }

    // Download the model, printing progress updates as they arrive.
    for try await update in try await engine.download(model: model).iterator() {
        print("Download progress: \(update.progress())")
    }

    let textToSummarize = "A Large Language Model (LLM) is a type of artificial intelligence that processes and generates human-like text. It is trained on vast datasets containing books, articles, and web content, allowing it to understand and predict language patterns. LLMs use deep learning, particularly transformer-based architectures, to analyze text, recognize context, and generate coherent responses. These models have a wide range of applications, including chatbots, content creation, translation, and code generation. One of the key strengths of LLMs is their ability to generate contextually relevant text based on prompts. They utilize self-attention mechanisms to weigh the importance of words within a sentence, improving accuracy and fluency. Examples of popular LLMs include OpenAI's GPT series, Google's BERT, and Meta's LLaMA. As these models grow in size and sophistication, they continue to enhance human-computer interactions, making AI-powered communication more natural and effective."
    let prompt = "Text is: \"\(textToSummarize)\". Write only summary itself."

    // Disable reasoning in the system message; the user message carries the prompt.
    let messages = [
        ChatMessage.system().withReasoningEffort(reasoningEffort: .disabled),
        ChatMessage.user().withText(text: prompt)
    ]

    // Open a chat session with the summarization speculation preset.
    let chatConfig = ChatConfig.create().withSpeculationPreset(speculationPreset: .summarization)
    let session = try await engine.chat(model: model, config: chatConfig)

    // Greedy sampling with a 256-token limit.
    let chatReplyConfig = ChatReplyConfig.create().withTokenLimit(tokenLimit: 256).withSamplingMethod(samplingMethod: .greedy)
    let replies = try await session.reply(input: messages, config: chatReplyConfig)
    guard let reply = replies.last else {
        return
    }

    print("Summary: \(reply.message.text() ?? "empty")")
    print("Generation t/s: \(reply.stats.generateTokensPerSecond ?? 0.0)")
}

Initialize a tsconfig.json

{
  "compilerOptions": {
    "target": "es2020",
    "module": "commonjs",
    "moduleResolution": "node",
    "strict": true,
    "esModuleInterop": true,
    "outDir": "dist",
    "types": [
      "node"
    ]
  },
  "include": [
    "*.ts"
  ]
}

Create main.ts

import { ChatConfig, ChatMessage, ChatReplyConfig, ChatSpeculationPresetSummarization, Engine, EngineConfig, ReasoningEffort, SamplingMethodGreedy } from '@trymirai/uzu';

async function main() {
    let engineConfig = EngineConfig.create();
    let engine = await Engine.create(engineConfig);

    let model = await engine.model('Qwen/Qwen3-0.6B');
    if (!model) {
        throw new Error('Model not found');
    }

    // Download the model, printing progress updates as they arrive.
    for await (const update of await engine.download(model)) {
        console.log('Download progress:', update.progress);
    }

    const textToSummarize =
        "A Large Language Model (LLM) is a type of artificial intelligence that processes and generates human-like text. It is trained on vast datasets containing books, articles, and web content, allowing it to understand and predict language patterns. LLMs use deep learning, particularly transformer-based architectures, to analyze text, recognize context, and generate coherent responses. These models have a wide range of applications, including chatbots, content creation, translation, and code generation. One of the key strengths of LLMs is their ability to generate contextually relevant text based on prompts. They utilize self-attention mechanisms to weigh the importance of words within a sentence, improving accuracy and fluency. Examples of popular LLMs include OpenAI's GPT series, Google's BERT, and Meta's LLaMA. As these models grow in size and sophistication, they continue to enhance human-computer interactions, making AI-powered communication more natural and effective.";
    const prompt = `Text is: "${textToSummarize}". Write only summary itself.`;

    // Disable reasoning in the system message; the user message carries the prompt.
    let messages = [
        ChatMessage.system().withReasoningEffort("Disabled" as ReasoningEffort),
        ChatMessage.user().withText(prompt)
    ];

    // Open a chat session with the summarization speculation preset.
    let chatConfig = ChatConfig.create().withSpeculationPreset(new ChatSpeculationPresetSummarization());
    let session = await engine.chat(model, chatConfig);

    // Greedy sampling with a 256-token limit.
    let chatReplyConfig = ChatReplyConfig.create().withTokenLimit(256).withSamplingMethod(new SamplingMethodGreedy());
    let reply = (await session.reply(messages, chatReplyConfig))[0];
    if (reply) {
        console.log('Summary: ', reply.message.text);
        console.log('Generation t/s: ', reply.stats.generateTokensPerSecond);
    }
}

main().catch((error) => {
    console.error(error);
});

Install dependencies

cargo add uzu --git https://github.com/trymirai/uzu

cargo add tokio --features full

Paste into src/main.rs

use uzu::{
    engine::{Engine, EngineConfig},
    types::{
        basic::{ReasoningEffort, SamplingMethod},
        session::chat::{ChatConfig, ChatMessage, ChatReplyConfig, ChatSpeculationPreset},
    },
};

#[tokio::main]
async fn main() -> Result<(), Box<dyn std::error::Error>> {
    let engine_config = EngineConfig::default();
    let engine = Engine::new(engine_config).await?;

    let model = engine.model("Qwen/Qwen3-0.6B".to_string()).await?.ok_or("Model not found")?;

    // Download the model, printing progress updates as they arrive.
    let downloader = engine.download(&model).await?;
    while let Some(update) = downloader.next().await {
        println!("Download progress: {}", update.progress());
    }

    let text_to_summarize = "A Large Language Model (LLM) is a type of artificial intelligence that processes and generates human-like text. \
        It is trained on vast datasets containing books, articles, and web content, allowing it to understand and predict language patterns. \
        LLMs use deep learning, particularly transformer-based architectures, to analyze text, recognize context, and generate coherent responses. \
        These models have a wide range of applications, including chatbots, content creation, translation, and code generation. \
        One of the key strengths of LLMs is their ability to generate contextually relevant text based on prompts. \
        They utilize self-attention mechanisms to weigh the importance of words within a sentence, improving accuracy and fluency. \
        Examples of popular LLMs include OpenAI's GPT series, Google's BERT, and Meta's LLaMA. \
        As these models grow in size and sophistication, they continue to enhance human-computer interactions, \
        making AI-powered communication more natural and effective.";
    let prompt = format!("Text is: \"{text_to_summarize}\". Write only summary itself.");

    // Disable reasoning in the system message; the user message carries the prompt.
    let messages = vec![
        ChatMessage::system().with_reasoning_effort(ReasoningEffort::Disabled),
        ChatMessage::user().with_text(prompt),
    ];

    // Open a chat session with the summarization speculation preset.
    let chat_config = ChatConfig::default().with_speculation_preset(Some(ChatSpeculationPreset::Summarization {}));
    let session = engine.chat(model, chat_config).await?;

    // Greedy sampling with a 256-token limit.
    let chat_reply_config =
        ChatReplyConfig::default().with_token_limit(Some(256)).with_sampling_method(SamplingMethod::Greedy {});
    let replies = session.reply(messages, chat_reply_config).await?;
    if let Some(reply) = replies.first() {
        println!("Summary: {}", reply.message.text().unwrap_or_default());
        println!("Generation t/s: {}", reply.stats.generate_tokens_per_second.unwrap_or_default());
    }

    Ok(())
}

Because of speculative decoding, the model’s run count is lower than the number of tokens it generates: a single run can accept several speculated tokens at once, which significantly improves generation speed.
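To make the run-count observation concrete, here is a minimal toy sketch of greedy speculative decoding in Python. It does not use uzu and makes no claim about how the library is implemented: `generate_with_speculation`, `next_token`, and `draft_from_prompt` are hypothetical names, the "model" simply replays a fixed target sequence, and the drafting rule (propose the tokens that followed the last emitted token in the prompt) is an assumed stand-in for whatever the summarization preset does internally.

```python
from typing import Callable, List, Tuple

Token = str


def generate_with_speculation(
    next_token: Callable[[List[Token]], Token],
    draft: Callable[[List[Token]], List[Token]],
    prompt: List[Token],
    token_limit: int,
) -> Tuple[List[Token], int]:
    """Return (generated tokens, number of simulated model runs)."""
    output: List[Token] = []
    runs = 0
    while len(output) < token_limit:
        context = prompt + output
        guesses = draft(context)
        # A real engine would score the context plus all guesses in one batched
        # forward pass; this toy counts each verification step as a single run.
        runs += 1
        accepted: List[Token] = []
        for guess in guesses:
            if guess != next_token(context + accepted):
                break  # first mismatch: discard this guess and all later ones
            accepted.append(guess)
        # The same pass also yields the model's own token after the accepted prefix.
        accepted.append(next_token(context + accepted))
        output.extend(accepted)
    return output[:token_limit], runs


# Hypothetical toy data: the "summary" mostly copies spans of the prompt.
prompt = "llms process and generate human-like text using transformer architectures".split()
target = "llms generate human-like text using transformer architectures".split()


def next_token(context: List[Token]) -> Token:
    """Stand-in for the model: replay `target`, then emit an end marker."""
    index = len(context) - len(prompt)
    return target[index] if index < len(target) else "<eos>"


def draft_from_prompt(context: List[Token], lookahead: int = 3) -> List[Token]:
    """Assumed drafting rule: propose the tokens that followed the last token in the prompt."""
    last = context[-1]
    if last not in prompt:
        return []
    start = prompt.index(last) + 1
    return prompt[start:start + lookahead]


tokens, runs = generate_with_speculation(next_token, draft_from_prompt, prompt, len(target))
print(f"{len(tokens)} tokens in {runs} model runs")
```

In this toy run the loop produces 7 tokens with only 4 simulated model runs, because two of the runs accept drafted tokens in addition to the token the model emits itself. Summaries tend to reuse phrases from the input, which is why drafting from the prompt is a plausible fit for this kind of task.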